Generative Engine Optimization: The Definitive 2026 Practitioner's Playbook

Move beyond the '10 Blue Links.' Learn how to optimize for RAG frameworks, information gain, and semantic density to secure high-value citations in AI-generated search results.

1. The Post-Search Era: The Death of the 10 Blue Links

The search landscape has undergone a seismic shift. In 2025, the primary gateway to information is no longer a list of links, but a synthesized response. Generative AI Overviews (AIO) and LLM-driven agents now capture the “Zero-Click” intent that once fueled top-of-funnel organic traffic [4].

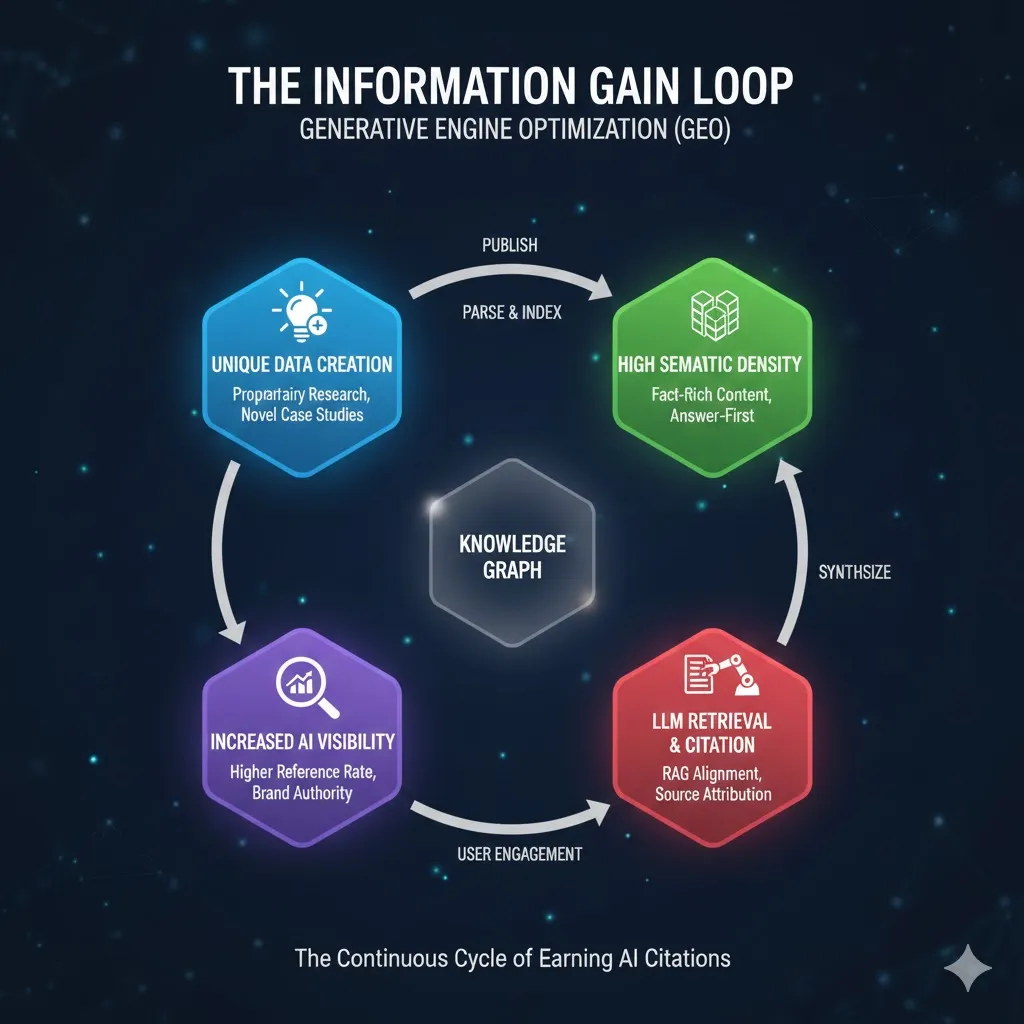

For brands, this isn’t an “SEO-pocalypse”; it’s a transition to Generative Engine Optimization (GEO). We are no longer just optimizing for a crawler; we are optimizing for a reasoning engine. The goal has shifted from “How do I rank #1?” to “How do I become the primary source for the LLM’s synthesis?” [7].

2. GEO vs. SEO: Mapping the Paradigm Shift

Traditional SEO focuses on keywords, backlinks, and dwell time. GEO, however, prioritizes verifiability and relevance density.

| Feature | Traditional SEO | Generative Engine Optimization (GEO) |
|---|---|---|
| Primary Goal | SERP Ranking | Citation Rate & Source Attribution |
| Unit of Value | Page Authority | Information Gain & Fact Density |
| Algorithm | PageRank / HCU | RAG (Retrieval-Augmented Generation) |
| Success Metric | Click-Through Rate (CTR) | Brand Mention / “Referenced by…” |

In this new ecosystem, “index bloat” is a terminal illness. LLMs prefer concise, high-signal nodes of data over long-form filler content [3].

3. The RAG Framework: How LLMs “Search” Now

To optimize for AI, you must understand Retrieval-Augmented Generation (RAG). When a user asks a question, the generative engine doesn’t just “hallucinate” an answer; it queries a vector database of indexed content to find the most relevant “chunks” [1].

As a practitioner, your content must be “chunkable.” If your data is buried in a 4,000-word fluff piece without clear headers or semantic markers, the RAG process will likely bypass it in favor of a more structured competitor. We are now optimizing for the Retriever first, and the Generator second [6].
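To make “chunkable” concrete, here is a minimal sketch of a retriever under simplifying assumptions: it splits a document at markdown-style `#` headers and ranks chunks by bag-of-words cosine similarity. Real generative engines use learned embeddings and a vector database; the function names (`chunk_by_headers`, `retrieve`) and the sample text are hypothetical and only illustrate why clear headers give the retriever clean units to score.

```python
import math
import re
from collections import Counter


def chunk_by_headers(text):
    """Split markdown-style text into (heading, body) chunks at '#' lines."""
    chunks, heading, body = [], "intro", []
    for line in text.splitlines():
        if line.startswith("#"):
            if body:
                chunks.append((heading, " ".join(body)))
            heading, body = line.lstrip("# ").strip(), []
        elif line.strip():
            body.append(line.strip())
    if body:
        chunks.append((heading, " ".join(body)))
    return chunks


def tokenize(text):
    """Lowercase and keep alphanumeric tokens only."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=1):
    """Return the k best (score, heading) pairs for the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(h + " " + b))), h) for h, b in chunks]
    return sorted(scored, reverse=True)[:k]


# Hypothetical two-section page.
doc = (
    "# Pricing\nThe plan costs 20 dollars per month.\n"
    "# Setup\nInstall the agent with one command.\n"
)
chunks = chunk_by_headers(doc)
best = retrieve("how much does the plan cost", chunks)
```

In this toy run the “Pricing” chunk scores highest because the answer sits in its own clearly bounded section rather than being diluted across one long block.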

4. Information Gain & Semantic Density: The New Ranking Factors

In a world where AI can summarize existing content in seconds, “regurgitated” content has zero value. To win the citation, your content must provide Information Gain—unique data, novel perspectives, or proprietary case studies that the LLM cannot find elsewhere [3].

Semantic density refers to the concentration of “facts per sentence.” LLMs are designed to summarize; if your content is high-signal and fact-dense, the generator is more likely to use your specific phrasing or data point as a “grounding truth” in its response [1].
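“Facts per sentence” has no standard formula, so the following is only a rough proxy for auditing drafts, not a measure any generative engine is known to use. It counts two assumed signals per sentence: tokens containing digits, and capitalized words that are not the first word of the sentence (a crude stand-in for named entities). The function name `fact_density` is hypothetical.

```python
import re


def fact_density(text):
    """Average count of crude 'fact signals' per sentence.

    Signals (assumptions, not an industry metric):
      - any token containing a digit
      - any capitalized token that is not the first word of its sentence
    """
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    if not sentences:
        return 0.0
    signals = 0
    for sentence in sentences:
        words = sentence.split()
        signals += sum(1 for w in words if re.search(r"\d", w))
        signals += sum(
            1 for w in words[1:] if w[0].isupper() and not re.search(r"\d", w)
        )
    return signals / len(sentences)


fluffy = "Search is changing fast. Everyone should adapt now."
dense = "GPTBot fetched 1200 pages in March. Median response time was 340 ms."

fluffy_score = fact_density(fluffy)
dense_score = fact_density(dense)
```

Comparing scores between drafts of the same page is more meaningful than reading any single number in isolation.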

5. Technical Foundation: Optimizing for the AI Crawler

Technical GEO is the 2025 evolution of technical SEO. The focus shifts from crawl budget to parsing efficiency:

- Server-Side Rendering (SSR): Ensure AI crawlers (such as GPTBot) see the full content immediately, not a blank JS-rendered page.

- llms.txt Implementation: Following the emerging standard, hosting an /llms.txt file at your root provides a markdown-optimized summary specifically for AI training and RAG retrieval.

- Clean HTML Semantics: Use <article>, <section>, and <aside> tags strictly. These act as “boundary markers” for RAG chunking algorithms [6].
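As an illustration of the llms.txt item above, the sketch below assembles a file in the layout the llms.txt proposal describes: an H1 title, a blockquote summary, then H2 sections containing link lists. The helper name `build_llms_txt`, the site name, and the example.com URLs are placeholders, and the proposal is still evolving, so check the current specification before publishing.

```python
def build_llms_txt(title, summary, sections):
    """Render an llms.txt-style markdown document.

    sections maps a section name to a list of (label, url, note) tuples.
    """
    lines = [f"# {title}", "", f"> {summary}", ""]
    for name, links in sections.items():
        lines.append(f"## {name}")
        lines.append("")
        for label, url, note in links:
            lines.append(f"- [{label}]({url}): {note}")
        lines.append("")
    # Collapse trailing blank lines into a single newline.
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content for a hypothetical site.
llms_txt = build_llms_txt(
    "Example Co",
    "Docs for the Example API.",
    {
        "Docs": [
            (
                "Quickstart",
                "https://example.com/quickstart.md",
                "Install and first request",
            )
        ]
    },
)
```

Writing `llms_txt` to `/llms.txt` at the site root is then an ordinary deployment step.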

6. The “Citation-Ready” Architecture: Answer-First Modeling

To increase your “Reference Rate,” restructure your articles using the Inverted Pyramid for AI:

1. Direct Answer: Start with a 2-3 sentence summary that answers the primary intent.

2. Evidence & Data: Follow immediately with a table, list, or unique statistic.

3. Contextual Depth: Provide the “How” and “Why” for users who click through.

Schema.org markup is no longer optional. Detailed ClaimReview and DataFeed schemas give LLMs machine-readable statements to verify, which improves the odds of earning a citation link in the AI Overview [7].
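For reference, a ClaimReview object can be generated as JSON-LD with a few lines of Python. This is a minimal sketch: the property names (`claimReviewed`, `reviewRating`, `author`) come from the Schema.org vocabulary, while the helper name `claim_review_jsonld`, the claim text, the URL, and the rating scale are placeholder assumptions. Whether a given engine actually consumes this markup is not something the code can guarantee.

```python
import json


def claim_review_jsonld(claim, url, publisher, rating, best=5, worst=1):
    """Build a Schema.org ClaimReview object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "ClaimReview",
        "url": url,
        "claimReviewed": claim,
        "author": {"@type": "Organization", "name": publisher},
        "reviewRating": {
            "@type": "Rating",
            "ratingValue": rating,
            "bestRating": best,
            "worstRating": worst,
        },
    }


# Placeholder values for illustration only.
markup = claim_review_jsonld(
    claim="Server-side rendering exposes full page content to crawlers.",
    url="https://example.com/fact-checks/ssr",
    publisher="Example Co",
    rating=5,
)

# Embed the result in the page head as a JSON-LD script tag.
script_tag = (
    '<script type="application/ld+json">'
    + json.dumps(markup, indent=2)
    + "</script>"
)
```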

7. Community Signals: Why Reddit is Your Best GEO Ally

In 2025, LLMs have a heavy bias toward “Human-First” platforms. Data from Reddit, Quora, and niche forums are often used as the “Social Proof” layer for generative responses [8].

Practitioners must move beyond their own domains. Engaging in community discourse and earning mentions on high-authority forums creates a “Secondary Index” that LLMs query to validate brand authority. If Reddit users suggest your tool for a specific use case, the AI will likely mirror that recommendation [5].

8. Multimodal GEO: Optimizing for Non-Textual Intelligence

In 2025, generative engines are truly multimodal. They “see” images and “hear” video content as raw data points. Optimizing for GEO now includes:

- Visual Grounding: Descriptive alt-text is no longer just for accessibility; it is a grounding vector. High-quality, original diagrams (like the ones in this post) serve as “Visual Proof” that AI engines can reference in multimodal responses [5].

- Video Semantic Indexing: LLMs now use “timestamp-level” retrieval. By providing clear, structured transcripts and chapter markers, you allow the AI to extract specific insights from your video content to answer complex “how-to” queries [4].
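One way to prepare video for timestamp-level retrieval is to group transcript segments under their chapter markers, so each chapter becomes a self-contained chunk. The sketch below assumes `M:SS` or `H:MM:SS` timestamps and that chapters are listed in time order; the function names and the sample transcript are hypothetical, and real engines may index video quite differently.

```python
def to_seconds(stamp):
    """Convert 'M:SS' or 'H:MM:SS' into a number of seconds."""
    seconds = 0
    for part in stamp.split(":"):
        seconds = seconds * 60 + int(part)
    return seconds


def chunk_by_chapter(chapters, segments):
    """Group transcript text under the chapter it falls in.

    chapters: [(start_stamp, title)] sorted by start time
    segments: [(stamp, text)] in any order of arrival
    """
    starts = [(to_seconds(stamp), title) for stamp, title in chapters]
    grouped = {title: [] for _, title in starts}
    for stamp, text in segments:
        sec = to_seconds(stamp)
        current = None
        for start, title in starts:
            if start <= sec:
                current = title  # last chapter starting at or before sec
        if current is not None:
            grouped[current].append(text)
    return {title: " ".join(texts) for title, texts in grouped.items()}


# Hypothetical chapter markers and transcript segments.
chapters = [("0:00", "Intro"), ("1:30", "Install")]
segments = [
    ("0:05", "Welcome."),
    ("1:29", "Let's begin."),
    ("1:31", "Run the installer."),
    ("2:10", "Restart."),
]
chapter_chunks = chunk_by_chapter(chapters, segments)
```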

9. Measuring Success: From CTR to “Reference Rates”

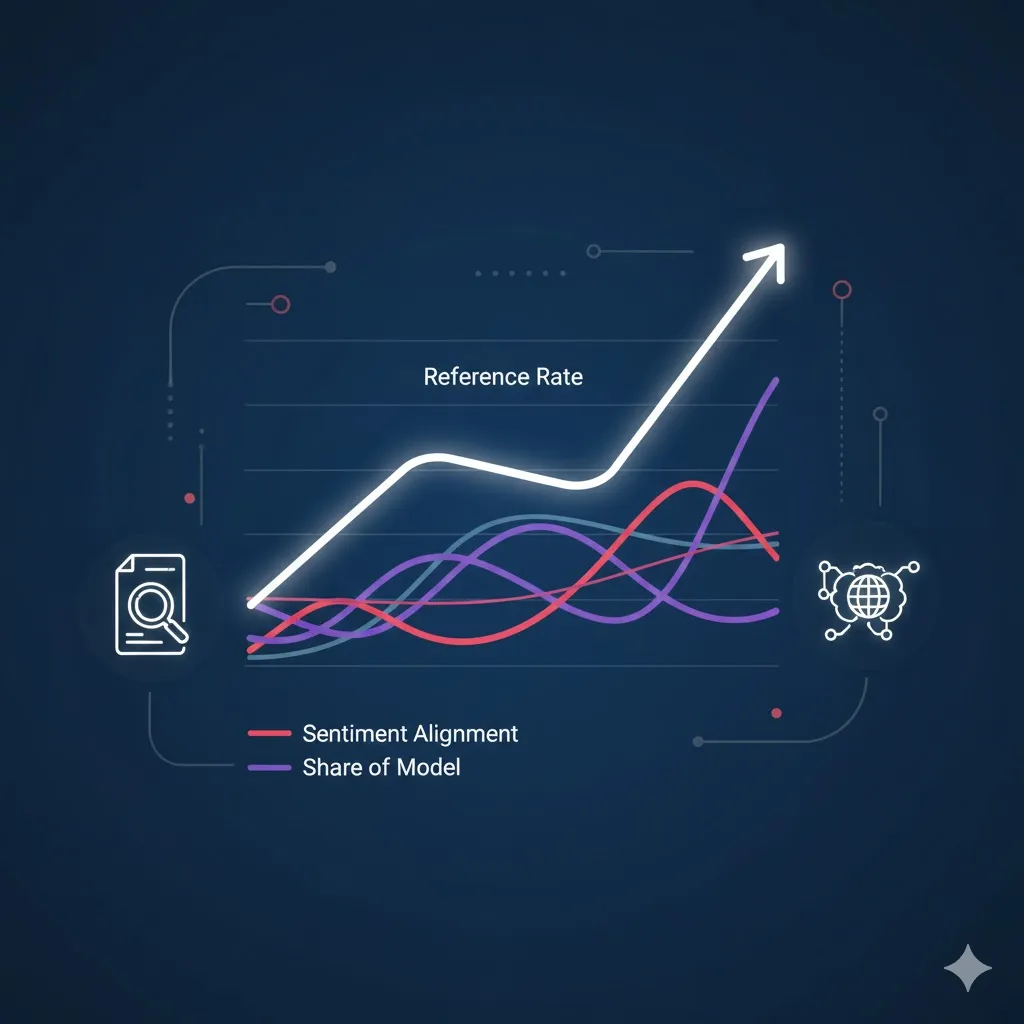

The old dashboard of impressions and clicks is incomplete. In a GEO-first world, success is measured by AI Visibility:

1. Reference Rate: The percentage of AI-generated responses for your target keywords that cite your domain as a source.

2. Sentiment Alignment: Does the LLM describe your brand using the specific “Voice” or “Authority” markers you’ve optimized for?

3. Share of Model (SoM): Analyzing how often your proprietary data appears in the training sets or RAG outputs compared to competitors [2].
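The Reference Rate defined above reduces to simple arithmetic once you have logged which URLs each AI response cited. A minimal sketch, assuming the input is one list of cited URLs per sampled response (how you collect those samples is out of scope here, and `reference_rate` is a hypothetical helper name):

```python
from urllib.parse import urlparse


def reference_rate(responses, domain):
    """Fraction of responses that cite `domain` or one of its subdomains.

    responses: list of lists of cited URLs, one inner list per AI response.
    """
    if not responses:
        return 0.0

    def cites_domain(urls):
        for url in urls:
            host = urlparse(url).hostname or ""
            if host == domain or host.endswith("." + domain):
                return True
        return False

    return sum(cites_domain(urls) for urls in responses) / len(responses)


# Hypothetical sample of four responses for one target keyword.
sampled = [
    ["https://example.com/a", "https://other.org/x"],
    ["https://other.org/y"],
    ["https://blog.example.com/z"],
    [],
]
rate = reference_rate(sampled, "example.com")  # 2 of 4 responses cite the domain
```

Matching on the parsed hostname rather than a substring avoids counting look-alike domains such as notexample.com.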

10. 2026 Roadmap: The Controlled Learning Framework

To stay ahead, practitioners must adopt a “Controlled Learning” experimental framework. This involves:

- A/B Testing Content Density: Testing whether a 500-word fact-dense article earns more citations than a 2,000-word comprehensive guide.

- Source Attribution Monitoring: Using tools to track which specific sentences of your content are being “paraphrased” by AI Overviews [3].

- Synthetic Persona Testing: Prompting LLMs to see if they recognize your brand as an authority in a “vacuum” (without RAG) vs. with RAG enabled.
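For the content-density A/B test, a two-proportion z-test is one reasonable way to check whether a difference in citation rates is more than noise. This is a sketch under the usual assumptions (independent samples, reasonably large counts); the function name and the numbers in the usage example are made up for illustration.

```python
import math


def two_proportion_z(cited_a, total_a, cited_b, total_b):
    """Two-sided z-test for a difference between two citation rates.

    Returns (z, p_value). Uses the pooled standard error.
    """
    p_a = cited_a / total_a
    p_b = cited_b / total_b
    pooled = (cited_a + cited_b) / (total_a + total_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all, nothing to compare
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Hypothetical: variant A (short, fact-dense) cited in 40 of 100 sampled
# responses, variant B (long guide) cited in 20 of 100.
z, p_value = two_proportion_z(40, 100, 20, 100)
```

A small p-value suggests the gap is unlikely to be chance, but AI responses vary between runs, so repeat the sampling before acting on a single result.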

References & Verified Documentation

[1] GEO: Generative Engine Optimization. Aggarwal et al. Technical foundation for “selectable” content optimization.

[2] GEO Case Study: 3X’ing Leads. Practical evidence of citation-driven conversion.

[3] The Definitive Technical Guide to GEO 2025. GrowthBook. Analysis of reference rates and information gain.

[4] GEO Trends To Watch In 2026. Digital Authority Partners. Shift from links to knowledge nodes.

[5] 3 GEO Experiments for 2025. Search Engine Land. Methodology for testing LLM brand perception.

[6] GEO is the New SEO: Practical Playbook. LLM Pulse. Server-side strategies and bot monitoring.

[7] GEO Strategies 2025: Optimizing for AI Search. ClickForest. Comparison of citation vs. traditional ranking.

[8] Reddit For GEO (2025): Boost AI Citations. Wellows. Community signals as primary grounding vectors for LLMs.